What does pragma OMP parallel for do?

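The pragma omp parallel directive forks additional threads to carry out the work enclosed in the construct in parallel. The original thread is denoted the master thread and has thread ID 0. The combined form, pragma omp parallel for, additionally divides the iterations of the loop that follows among those threads. A classic first example is a C program that displays “Hello, world.” using multiple threads.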

What is loop parallelism in OpenMP?

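Loop parallelism in OpenMP means dividing the iterations of a loop among the threads of a team. The following exercise walks through it. Write a program for the loop in question, and parallelize it using OpenMP parallel for directives. First, put a parallel directive around your loop. Next, put a critical directive in front of the update: the result is then correct, but the code is very much not faster. Finally, remove the critical and add a reduction(+:quarterpi) clause to the for directive.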

How do you parallelize a nested loop?

How to decide how to parallelize nested loops on a GPU:

- Run all main iterations in parallel, and all inner loops sequentially.

- Run all main iterations sequentially, and all inner loops in parallel.

- Run all main iterations in parallel, two of the inner loops in parallel, and the rest sequentially.

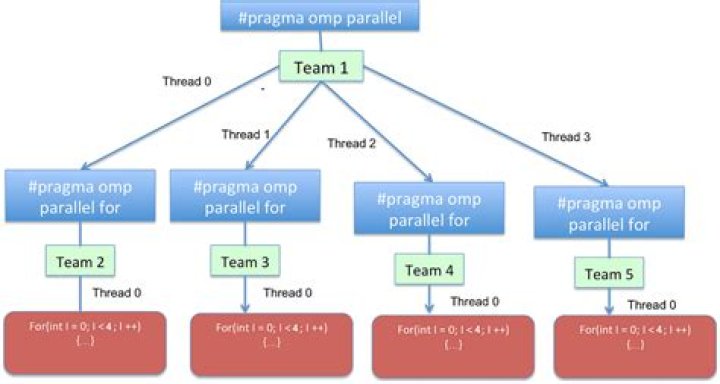

What is nested parallelism?

OpenMP parallel regions can be nested inside each other. If nested parallelism is disabled, then the new team created by a thread encountering a parallel construct inside a parallel region consists only of the encountering thread. If nested parallelism is enabled, then the new team may consist of more than one thread.

How does Pragma OMP for work?

A section of code that is to be executed in parallel is marked by a special directive (an omp pragma). Each thread executes the parallel section of the code independently; inside it, an omp for directive divides the iterations of the loop that follows among the threads of the team. When a thread finishes, it joins the master. When all threads have finished, the master continues with the code following the parallel section.

What is Pragma OMP parallel private?

Example (Fortran): !$OMP parallel private(x). The private clause declares data to have a separate copy in the memory of each thread. Such private variables are not initialized from the original variable; they start out as a newly declared variable would in a main program. Any value computed in them goes away at the end of the parallel region.

How does OMP parallel for work?

The slave threads all run in parallel and run the same code. Each thread executes the parallelized section of the code independently. When a thread finishes, it joins the master. When all threads have finished, the master continues with the code following the parallel section.

What is omp_set_nested?

The omp_set_nested subroutine enables or disables nested parallelism. If the argument is .TRUE., nested parallelism is enabled, and parallel regions that are nested can deploy additional threads to the team. It is up to the runtime environment to determine whether additional threads should be deployed. Since OpenMP 5.0 this routine is deprecated in favor of omp_set_max_active_levels.

What is omp_set_dynamic?

omp_set_dynamic indicates whether the number of threads available in upcoming parallel regions can be adjusted by the run time. omp_get_dynamic returns a value that indicates whether the number of threads available in upcoming parallel regions can be adjusted by the run time.

What does OMP single do?

Single: Lets you specify that a section of code should be executed by a single thread, not necessarily the master thread. Critical: Specifies that code is executed by only one thread at a time.

How does OMP parallel work?

When run, an OpenMP program uses one thread in the sequential sections and several threads in the parallel sections. When execution reaches a parallel section (marked by an omp pragma), the directive causes slave threads to form. Each thread executes the parallel section of the code independently.

What is pragma OMP critical?

The omp critical directive identifies a section of code that must be executed by a single thread at a time.

How do I enable nested parallelism in OMP?

You can enable nested parallelism with omp_set_nested(1); your nested omp parallel for code will then work, but that might not be the best idea. By the way, why the dynamic scheduling? It is only worthwhile if loop iterations are evaluated in non-constant time.

How to parallelize only the outer loop in OpenMP?

The lines you have written will parallelize only the outer loop. To parallelize both you need to add a collapse clause: #pragma omp parallel for collapse(2) for (int i=0;i

Should I use for or for Pragma in OpenMP?

A more natural option is to use the for pragma. This has several advantages: you don’t have to calculate the loop bounds for the threads yourself, and you can also tell OpenMP to assign the loop iterations according to different schedules (section 18.3).

How do parallel threads work in OMP?

The first #pragma omp parallel will create a team of parallel threads, and the second will then try to create another team for each of the original threads, i.e. a team of teams. However, on almost all existing implementations the second team has only one thread unless nested parallelism is enabled: the second parallel region is essentially not used.